Flashing images can trigger epileptic seizures. When you have epilepsy, you can never be completely at ease when watching a movie or a series. So what if we could find a way to protect people with epilepsy from these potential triggers? Machine learning might offer one. Our colleague and ML expert William Verhaeghe built a proof of concept (PoC) that detects flashing images and warns the viewer.

The goal: helping people with epilepsy

Flashing images in movies, series, … can cause seizures in people with epilepsy, so watching a movie can go from relaxing to extremely stressful in a second. That’s what we want to tackle with machine learning. Detecting flashing images and warning the viewer, either at the beginning of the movie or some time before the flashing images occur, can be very helpful. So we started this project with one important goal in mind: preventing seizures caused by flashing images and sequences.
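To make the two warning options concrete, here is a minimal sketch that assumes a model has already produced one hypothetical prediction per frame (1 = flashing, 0 = normal). It merges consecutive flashing frames into time intervals and computes when to warn the viewer a fixed number of seconds beforehand. The function names and the 10-second default lead time are illustrative assumptions, not part of the PoC.

```python
from typing import List, Optional, Tuple


def flashing_intervals(predictions: List[int], fps: float) -> List[Tuple[float, float]]:
    """Merge runs of consecutive flashing frames (1) into (start, end) times in seconds."""
    intervals: List[Tuple[float, float]] = []
    run_start: Optional[int] = None
    # A trailing 0 acts as a sentinel so a run lasting until the final frame is closed too.
    for i, flashing in enumerate(list(predictions) + [0]):
        if flashing and run_start is None:
            run_start = i  # a flashing run begins at frame i
        elif not flashing and run_start is not None:
            intervals.append((run_start / fps, i / fps))  # the run ended just before frame i
            run_start = None
    return intervals


def warning_times(intervals: List[Tuple[float, float]], lead_seconds: float = 10.0) -> List[float]:
    """Return when to warn the viewer: `lead_seconds` before each interval, never before 0."""
    return [max(0.0, start - lead_seconds) for start, _ in intervals]
```

A non-empty interval list is also all that is needed for the simpler option: a single warning at the start of the movie.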

Starting off with some research

Before we could set up the ML project, we needed to dive into the facts and figures about epilepsy. We learned that 1 in 26 people in the US will develop epilepsy at some point in their lifetime. It is also important to know that, besides the people who get seizures from flashing images, many people without epilepsy experience other effects from them, such as headaches, nausea, dizziness, and other discomfort. So knowing where these flashing images occur can benefit all of us.

Important factors for detecting flashing images

When building this PoC, there are a few important factors to consider and keep track of:

Flash frequency: flashing lights between 5 and 30 flashes per second (hertz) are generally the most likely to trigger seizures

Brightness

Contrast with background lighting

Distance between the viewer and the light source

The wavelength of the light

Whether a person’s eyes are open or closed
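The frequency factor can be illustrated with a simple rule-based check. This is not the ML model from the PoC; it is a minimal sketch that assumes we already have the mean brightness of every frame on a 0-255 scale. The change threshold of 20 and the function names are hypothetical choices. A flash is counted as a pair of opposing brightness changes, and each whole second whose flash count falls in the 5 to 30 range is flagged.

```python
from typing import List, Sequence


def count_flashes(luma: Sequence[float], threshold: float = 20.0) -> int:
    """Count flashes in a run of per-frame mean brightness values (0-255).

    A transition is a frame-to-frame change of at least `threshold`; consecutive
    changes in the same direction count as one transition, so a slow fade is not
    a flash. A flash is a pair of opposing transitions (e.g. dark -> bright -> dark).
    """
    transitions = 0
    last_direction = 0
    for prev, cur in zip(luma, luma[1:]):
        delta = cur - prev
        if abs(delta) >= threshold:
            direction = 1 if delta > 0 else -1
            if direction != last_direction:  # only direction reversals start a new transition
                transitions += 1
                last_direction = direction
    return transitions // 2


def flagged_seconds(luma: Sequence[float], fps: int, low_hz: float = 5.0,
                    high_hz: float = 30.0, threshold: float = 20.0) -> List[int]:
    """Return the indices of whole seconds whose flash rate lies in [low_hz, high_hz]."""
    flagged = []
    for second, start in enumerate(range(0, len(luma) - fps + 1, fps)):
        rate = count_flashes(luma[start:start + fps], threshold)  # flashes in this 1 s window
        if low_hz <= rate <= high_hz:
            flagged.append(second)
    return flagged
```

Because this counts changes between frames, the highest rate it can see is roughly half the frame rate (about 12 flashes per second at 24 fps), which is one reason a learned model may be preferable to hand-tuned rules.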

Collecting data

To start building the first model, we needed to collect some sample data. This data needed to have the following characteristics:

A video in a format we can process

A video between 1 and 10 minutes

Very clear targets as well as other moving frames (so not just a blank image followed by flashes, but a valid video with some flashes in it)

A good split (~50%) between normal and flashing frames

Finding the right split is definitely one of the challenges in this project. Ideally, we have a 50/50 split of frames with and without targets, but that means generating a lot of the data ourselves. It is important to use different generation strategies; otherwise, the model learns to detect our generation strategy instead of the flashes, and the data becomes unusable.
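One way to avoid a single recognizable generation pattern is to rotate between several generators. The minimal sketch below assumes flashing segments can be described as per-frame brightness patterns; the three strategies (`square_wave`, `random_strobe`, `dim_pulses`) are hypothetical examples, not the ones used in the PoC. Cycling through them guarantees each strategy is used equally often, and `flashing_share` checks how close the labels are to the 50/50 target.

```python
import random
from typing import Callable, List, Tuple

Pattern = List[float]  # per-frame mean brightness, 0-255


def square_wave(n: int, rng: random.Random) -> Pattern:
    """Regular on/off flashing with a random half-period of 1-3 frames."""
    half = rng.randint(1, 3)
    return [255.0 if (i // half) % 2 == 0 else 0.0 for i in range(n)]


def random_strobe(n: int, rng: random.Random) -> Pattern:
    """Irregular flashing: each frame is bright with probability 0.5."""
    return [255.0 if rng.random() < 0.5 else 0.0 for _ in range(n)]


def dim_pulses(n: int, rng: random.Random) -> Pattern:
    """Lower-contrast flashing on top of a random base brightness."""
    base = rng.uniform(60.0, 120.0)
    return [base + (80.0 if i % 2 == 0 else 0.0) for i in range(n)]


STRATEGIES: List[Callable[[int, random.Random], Pattern]] = [square_wave, random_strobe, dim_pulses]


def generate_segments(count: int, n_frames: int, seed: int = 0) -> List[Tuple[str, Pattern]]:
    """Generate `count` flashing segments, cycling through the strategies in turn."""
    rng = random.Random(seed)
    segments = []
    for i in range(count):
        strategy = STRATEGIES[i % len(STRATEGIES)]
        segments.append((strategy.__name__, strategy(n_frames, rng)))
    return segments


def flashing_share(labels: List[int]) -> float:
    """Fraction of frames labelled as flashing (1); the goal here is about 0.5."""
    return sum(labels) / len(labels)
```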

Since this is a PoC, we decided to use only one video, which combines two existing videos.

First model ready to be tested

We developed an algorithm that reads a CSV file, imports the listed videos, and combines them at the listed timestamps. This way, we know exactly where the targets are.
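The post does not show the CSV layout, so this minimal sketch assumes hypothetical columns `video,start,end,flashing` (times in seconds, `flashing` as 0 or 1). It leaves out loading and concatenating the actual video files and only shows the bookkeeping: laying the clips back to back and recording where the flashing clips end up in the combined video.

```python
import csv
import io
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Clip:
    video: str      # source file name (hypothetical column)
    start: float    # start time in the source video, in seconds
    end: float      # end time in the source video, in seconds
    flashing: bool  # True if this clip is a target


def read_clips(csv_text: str) -> List[Clip]:
    """Parse rows with the hypothetical columns video,start,end,flashing."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        Clip(row["video"], float(row["start"]), float(row["end"]), row["flashing"].strip() == "1")
        for row in reader
    ]


def target_intervals(clips: List[Clip]) -> Tuple[List[Tuple[float, float]], float]:
    """Lay the clips back to back; return the flashing intervals in the combined
    video and the combined video's total duration (both in seconds)."""
    intervals = []
    offset = 0.0
    for clip in clips:
        duration = clip.end - clip.start
        if clip.flashing:
            intervals.append((offset, offset + duration))
        offset += duration  # the next clip starts where this one ends
    return intervals, offset
```

For example, a normal 30-second clip followed by a 5-second flashing clip yields a target interval from 30 to 35 seconds in the combined video.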

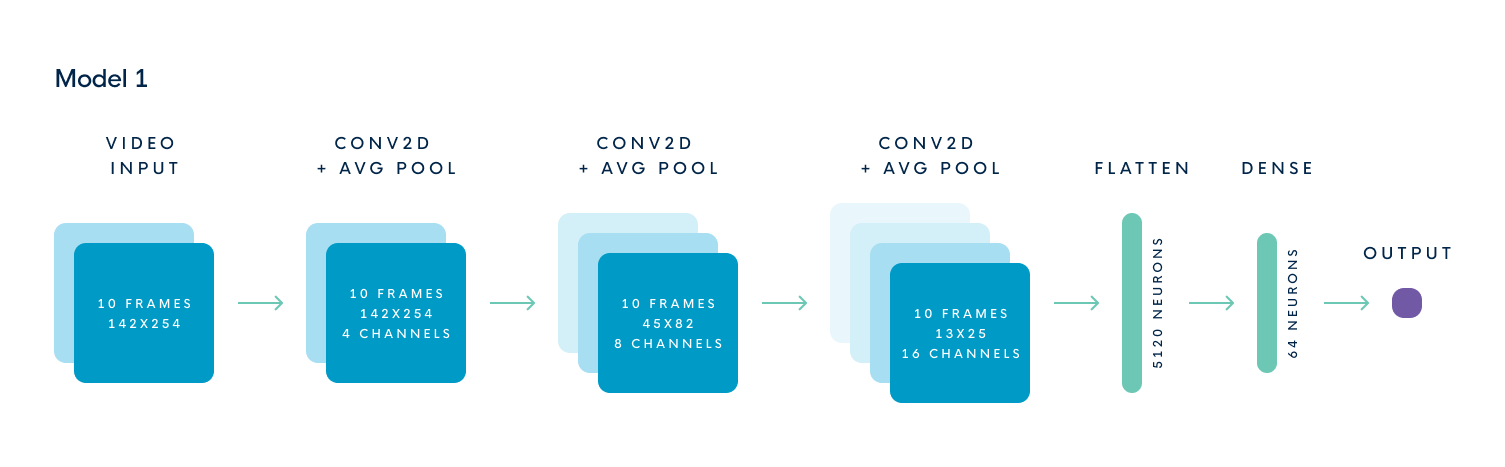

Once this is in place, we can process the video, determine for each frame whether it is a target, and use these labels to develop our model. The next step is, of course, the model itself.
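Turning target intervals into per-frame labels comes down to comparing each frame's timestamp with the intervals. This minimal sketch assumes the targets are given as `(start, end)` pairs in seconds, for example derived from the CSV timestamps, and that the video has a constant frame rate; `frame_labels` is a hypothetical helper name.

```python
from typing import List, Tuple


def frame_labels(intervals: List[Tuple[float, float]], duration: float, fps: float) -> List[int]:
    """Label each frame 1 if its timestamp falls inside a target interval [start, end), else 0."""
    n_frames = int(round(duration * fps))
    labels = []
    for i in range(n_frames):
        t = i / fps  # timestamp of frame i in seconds
        labels.append(int(any(start <= t < end for start, end in intervals)))
    return labels
```

With a target from 1 to 2 seconds in a 3-second video at 2 fps, only the frames at 1.0 s and 1.5 s are labelled as targets.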

During the process, we ran into some issues. Unfortunately, the training and validation curves of the simple model do not line up well. Both lines should move in the same direction: up for accuracy, down for loss. This suggests that our approach is too simplistic and that we need a better type of model.
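The "same direction" check can also be done programmatically. This minimal sketch assumes a per-epoch history dictionary with `loss` and `val_loss` keys, the shape of the `History.history` dictionary that Keras returns from `fit` when validation data is provided. The helper `curves_diverge` and its three-epoch window are hypothetical choices.

```python
from typing import Dict, List


def curves_diverge(history: Dict[str, List[float]], last_n: int = 3) -> bool:
    """Return True if, over the last `last_n` epochs, training loss went down
    while validation loss went up (the curves move in opposite directions)."""
    loss = history["loss"][-last_n:]
    val_loss = history["val_loss"][-last_n:]
    return loss[-1] < loss[0] and val_loss[-1] > val_loss[0]
```

Comparing only the endpoints of the window keeps the check simple; a noisier history may call for smoothing first.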

LSTM model

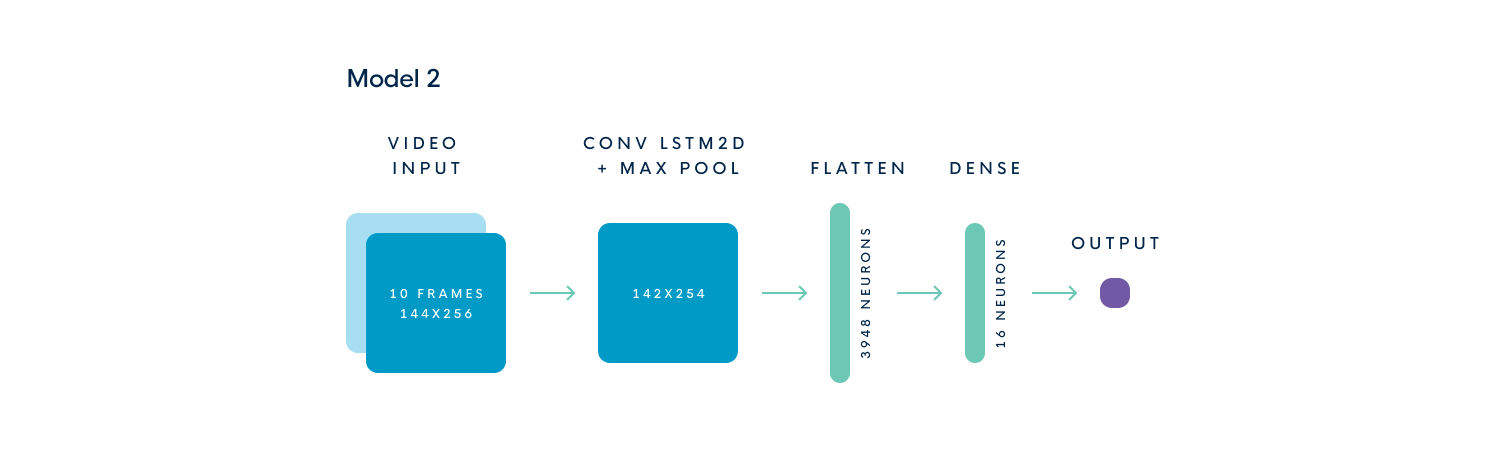

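We decided to fine-tune our first model and fix some issues we noticed while testing. After some experimentation, we settled on a ConvLSTM2D layer, which combines convolutions with LSTM recurrence so the model can learn from both the content of each frame and how it changes over time.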
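A recurrent layer like ConvLSTM2D consumes sequences of frames rather than single frames; in Keras, with the default `channels_last` format, its input has shape `(samples, time, rows, cols, channels)`. This minimal sketch shows one way to cut a labelled frame sequence into overlapping windows. The window length, stride, and the rule that a window is a target if any of its frames is flashing are assumptions for illustration, not necessarily what the PoC does.

```python
from typing import List, Sequence, Tuple, TypeVar

Frame = TypeVar("Frame")  # any frame representation, e.g. a (rows, cols, channels) array


def make_windows(frames: Sequence[Frame], labels: Sequence[int], window: int,
                 stride: int = 1) -> Tuple[List[List[Frame]], List[int]]:
    """Cut a frame sequence into windows of `window` frames, `stride` frames apart.

    A window is labelled 1 if any of its frames is a flashing target
    (an assumption; labelling by the last frame is another option).
    """
    if len(frames) != len(labels):
        raise ValueError("frames and labels must have the same length")
    xs, ys = [], []
    for start in range(0, len(frames) - window + 1, stride):
        xs.append(list(frames[start:start + window]))
        ys.append(int(any(labels[start:start + window])))
    return xs, ys
```

If each frame is an array of shape `(rows, cols, channels)`, stacking the windows (for example with `numpy.stack`) yields the five-dimensional input described above.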

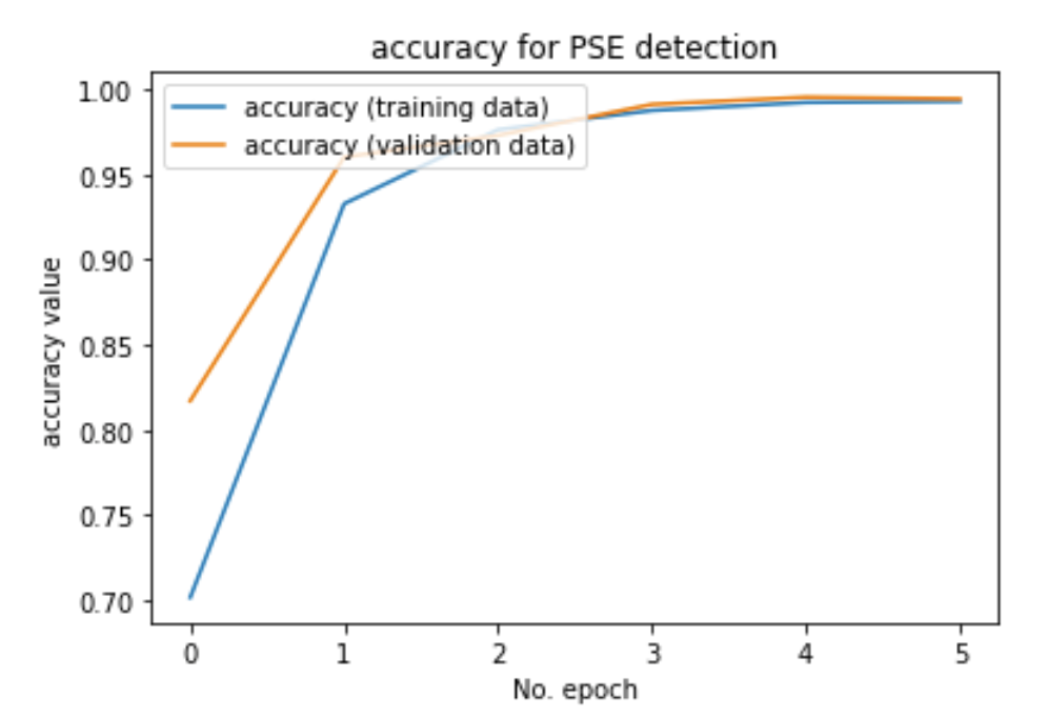

Testing showed that the model reaches an accuracy of more than 99% on both the training and validation data. On a completely different video, however, it is clear that the model needs a lot more training before it can be used in practice, though it has learned and shows promise.

Next steps

The next step is to train on a lot more data: hundreds of hours of video with many flashing parts. That is out of scope for this PoC, which proved the concept that flashing images can be detected with machine learning.